Migrating from Ghost to Next.js: A Journey with Claude and Cursor

How I migrated blog.rezvov.com from Ghost CMS to Next.js 16 with the help of Claude Code and Cursor IDE, including full CI/CD setup, newsletter automation, and comprehensive documentation for future LLM interactions.

The Future of User Interfaces and the Role of AI

User interfaces are collapsing to two surfaces: REST APIs for agents, and a single window for people. Behind the second one sits a state machine, not just a smart prompt.

Alex Rezvov Blog Now in Telegram! Tour for New Readers

I added Telegram as the fourth crosspost target. Same git push, four places. Here's how the piece fits in, and a curated tour of the blog for anyone who just found it.

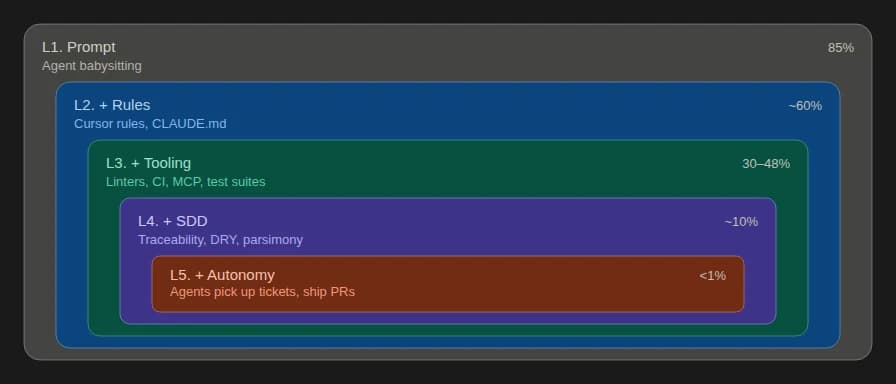

Five Levels of AI-Agent Adoption in Software Development Teams

From Stack Overflow replacement to autonomous agents. A practitioner's classification based on 100+ engineers across 12 active projects.

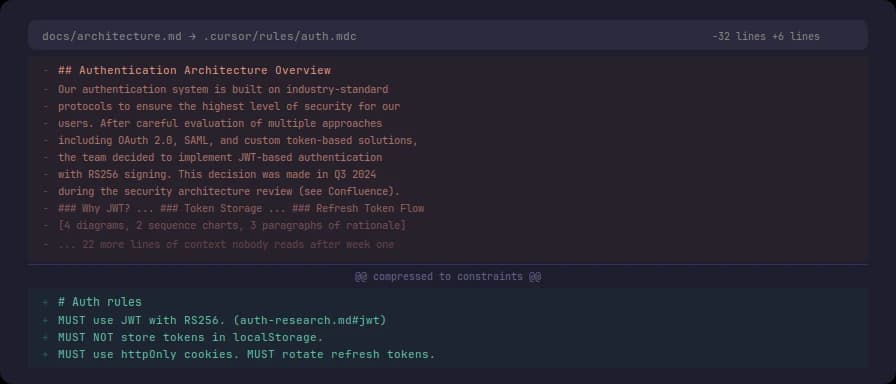

Less Documentation, More Signal

Text generation became free, so volume stopped signaling quality. Less documentation, written where the tools look, in directive vocabulary, and kept under constant relevance audit, works better for both humans and LLMs.

Five Weeks with a Next.js Blog: What Got Built

On February 15 I published the migration post. Five weeks later the blog has SEO, LLM SEO, RSS, cross-posting to Dev.to and Hashnode, a newsletter system, full-text search, and automated deployment. Here's what exists and why.

What CTOs Actually Said When I Asked About Rust and LLMs

I asked a group of CTOs what languages they use for LLM-assisted development. The answers split three ways. One of them made me rethink why Rust works so well with AI tools.

Build vs. Buy for Agent Harnesses: The Real Question

Every CTO in the agent harness space is asking build vs. buy. Most are asking the wrong question.

Rust and LLMs: The Compiler Does What Code Review Shouldn't Have To

Rust's biggest barrier was the learning curve. LLMs reduced it dramatically. The compiler, the type system, and the ownership model stayed. That combination matters.

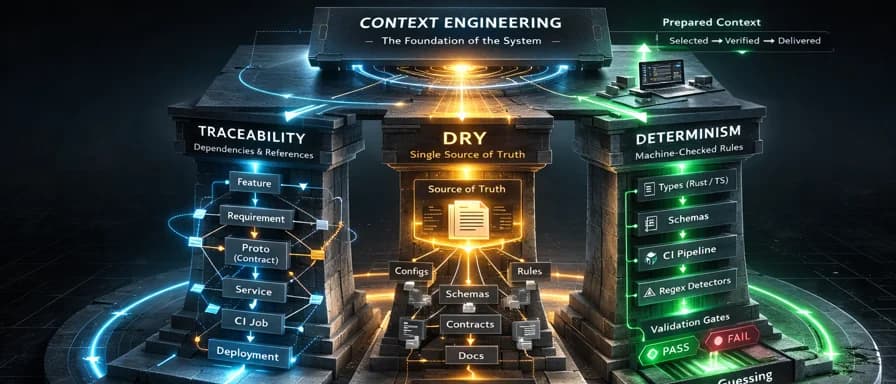

Specification-Driven Development: The Four Pillars

A practical framework for AI-assisted software development built on four non-negotiable principles: traceability, DRY, deterministic enforcement, and parsimony.

OpenClaw Troubleshooting: 'No Reply from Agent,' WORKFLOW_AUTO.md, and Silent Delivery Failures

Practical fixes for OpenClaw's most common issues: the 'no reply from agent' error, the WORKFLOW_AUTO.md phantom-file hallucination, and cron jobs that report delivered:true but send nothing.

Deploying OpenClaw: 16 Incidents, One Day, $1.50

My client got hyped about autonomous AI agents and asked me to deploy OpenClaw on a VPS. 10 hours, 16 incidents, 271 spam messages, and $1.50 later, we got a working daily digest. Here's what nobody tells you about running AI agents in production.

LLMs Changed the Rules: Git for Everyone, SQL for Everyone, Rust for Almost Everyone

LLMs didn't just make coding faster. They unlocked entire toolsets for people who never had access before: Git, SQL, regex, shell scripting, even Rust. The barriers were syntax and CLI complexity, not intelligence. LLMs removed exactly those barriers.

Principle of Parsimony in Context Engineering

The Principle of Parsimony in Context Engineering is a design rule for LLM prompts and context: formulate instructions and select artifacts using the minimum number of tokens sufficient to ensure unambiguous task interpretation, reserving the remaining token budget for the most valuable elements.

When Prompt Engineering Stops Being Enough

I use Claude and Cursor in development every day. Over time I noticed that I don't have one fixed workflow. I choose the approach depending on the task. Three real examples show when prompt engineering is enough and when you need context engineering.

Opus, Gemini, and ChatGPT Walk Into a Bar

A joke about AI model personalities, fact-checked against real user feedback. Plus Opus writes its own take from the inside.

Making Your Blog LLM-Friendly: Implementing llms.txt and Markdown Serving

A continuation of our Next.js blog migration journey: implementing an llms.txt catalog and serving markdown versions of posts for LLM indexers like Perplexity and ChatGPT, with a complete technical breakdown and lessons learned.